KM's OCR, and whether it might someday integrate with Monterey's Live Text, was discussed in the following thread when Monterey was in beta, but I want to make a fresh comment now that Monterey is out. (Hooray, KM 10.0 here!)

It appears that KM 10.0 continues to use its own OCR engine rather than the new Monterey Live Text OCR engine. I guess that's not a surprise, but I've moved on: all my OCR needs in KM are now met by Monterey's "Live Text" feature, and I use it a lot. It seems to be FAR more accurate and much faster. Here's how others can copy my success. First, the short explanation:

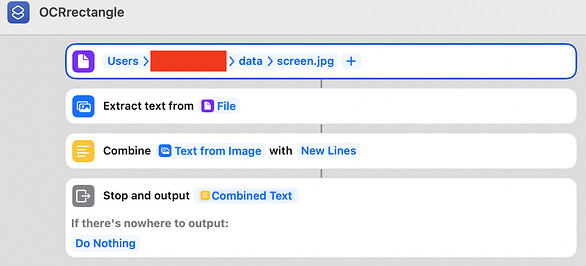

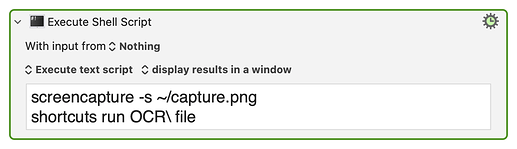

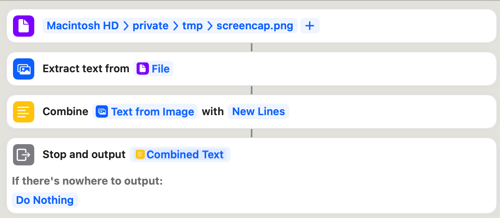

Step 1: You create a Shortcut in the Shortcuts App in Monterey called "OCRscreen" which performs an OCR of the screen and returns the result.

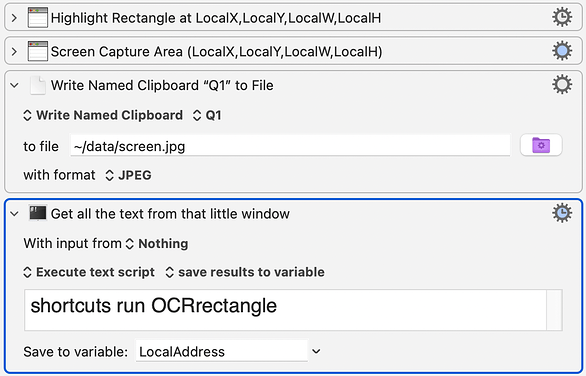

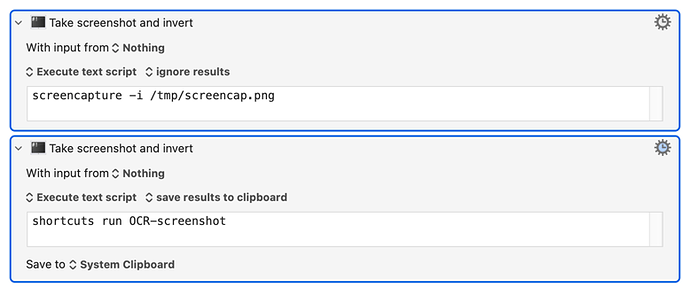

Step 2: You create an Execute Shell Script action in KM which executes this: "shortcuts run OCRscreen" and saves the result into a variable.
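As a minimal sketch of Step 2 outside of KM, the same command can be wrapped in a small shell function. This assumes the Shortcut is named OCRscreen as above and that it ends by outputting the recognized text; the function name `ocr_screen` is only illustrative and not part of KM or Shortcuts.

```shell
#!/bin/sh
# Sketch: run the "OCRscreen" Shortcut and pass its text through on stdout.
# Assumes macOS Monterey or later, where the `shortcuts` command exists,
# and a Shortcut named "OCRscreen" that outputs the recognized text.
ocr_screen() {
    shortcuts run "OCRscreen"
}

# Example use (on a Mac with the Shortcut installed):
#   text=$(ocr_screen)
#   printf '%s\n' "$text"
```

In KM, the equivalent is to put `shortcuts run OCRscreen` in the Execute Shell Script action and have the action save its results to a variable.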

That's the basic idea, but it leaves out some important details. For one thing, do you really want the entire screen OCR'd? Usually it's better to isolate a specific region. But keep in mind that Monterey's OCR is very fast: something that took 30 seconds with KM's engine took me only 1 second with Monterey's engine. Monterey is also amazingly accurate; it can even read text that looks "handwritten." So, because of its speed, you can probably get away with reading the whole screen.

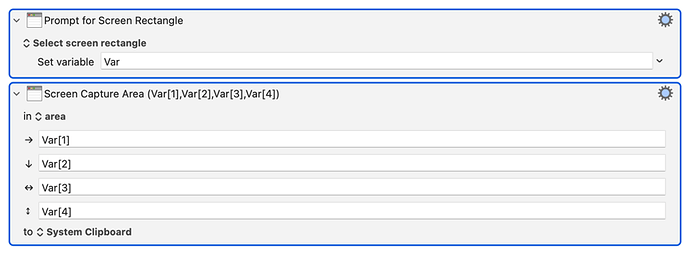

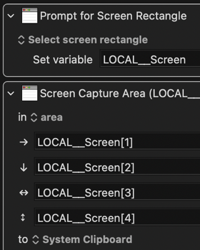

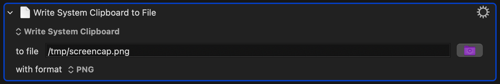

However, it seems like such a waste to OCR the whole screen, so I've created a Monterey Shortcut called OCRrectangle which does OCR on only a portion of the screen. While it's possible to pass the coordinates to the Shortcut, and I have successfully done that, I find it easier to have KM capture the area of interest and send it to Monterey's Shortcuts utility as a file. So that's what I'm going to show here.

Next, in order to call this shortcut, you need to use KM to capture the relevant portion of the screen and then call the shortcut, perhaps like this example:
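A rough command-line sketch of the same capture-then-OCR idea might look like the following. It is not the KM macro itself: it uses the macOS `screencapture` tool in place of KM's screen capture action, and it assumes the OCRrectangle Shortcut is set up to receive an image file as input and output the text it finds. The function name `ocr_rectangle` and the temporary file name are illustrative.

```shell
#!/bin/sh
# Sketch: OCR a rectangular screen region via the "OCRrectangle" Shortcut.
# Arguments: x y width height (screen coordinates).
# Assumes macOS Monterey or later and a Shortcut named "OCRrectangle"
# that accepts an image file as input and outputs the recognized text.
ocr_rectangle() {
    # Private temporary folder so the capture can carry a .png extension.
    _ocr_dir=$(mktemp -d "${TMPDIR:-/tmp}/ocr.XXXXXX") || return 1
    _ocr_img="$_ocr_dir/region.png"

    # -x: no shutter sound; -R: capture only the given rectangle.
    screencapture -x -R "$1,$2,$3,$4" "$_ocr_img" &&
        shortcuts run "OCRrectangle" --input-path "$_ocr_img"
    _ocr_status=$?

    # Clean up the capture whether or not the OCR succeeded.
    rm -rf "$_ocr_dir"
    return "$_ocr_status"
}

# Example use (on a Mac with the Shortcut installed):
#   text=$(ocr_rectangle 100 200 400 150)
```

Passing the image as a file keeps the Shortcut simple: it only has to extract text from its input, and the caller decides which part of the screen matters.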

This approach has been so fantastic, it has changed everything for me. It's so fast and accurate that it feels like KM now has a human trigger reading the screen. Moreover, I can run this OCR in an infinite loop without any apparent system sluggishness, and my M1 Mac doesn't even get warm. (I think Intel Macs can also access Live Text.) For me this has been a game changer.

Perhaps later I may post some of the higher-level routines that exploit this. For example, I have a macro that stores all kinds of triggers and actions in a Dictionary, so that I can specify, for example, "if you see the words 'Are you sure you want to quit?' then click on Yes." In fact, my macros can actually FIND the location of words on a screen, which is something neither KM's OCR nor Monterey's OCR can do. And that's amazing too!
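The actual macros aren't shown here, so purely as an illustration of the trigger-and-action idea, here is a hypothetical phrase-to-action table in plain shell. The rule list, the action labels, and the function name `action_for_text` are all made up for this sketch; it only decides which action label applies to a piece of OCR text, and the clicking itself would still be done by KM.

```shell
#!/bin/sh
# Hypothetical rule table: one "phrase|action" pair per line.
RULES='Are you sure you want to quit?|click Yes
Update available|click Later'

# Print the action of the first rule whose phrase occurs in the text ($1).
# Returns non-zero if no rule matches.
action_for_text() {
    while IFS='|' read -r phrase action; do
        case $1 in
            # The quoted "$phrase" is matched literally, so "?" is not a wildcard.
            *"$phrase"*) printf '%s\n' "$action"; return 0 ;;
        esac
    done <<EOF
$RULES
EOF
    return 1
}

# Example use with OCR output held in a variable:
#   action=$(action_for_text "$text") && echo "Would perform: $action"
```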

I have just one complaint: while Monterey's OCR can read an entire screen in one second, the screen capture action in KM takes two seconds to complete. This feels so strange, because capturing a screen and saving it to a file should be about 10 times faster than actually reading and processing it, especially since processing it requires reading the capture back from that file. Is there anything Peter can do to speed up the screen capture action? (Perhaps there's an API for screen capturing that could be used instead of calling the screencapture utility in macOS.)

I'm on a self-imposed sabbatical from these forums for a year because I don't seem to get along well with people, but I'm breaking that today to provide these cool ideas to the community. And if the community likes these ideas, it may help to get KM to incorporate Monterey's OCR.